Chapter 7: The Language Revolution - Understanding LLMs

The Language Revolution: Understanding LLMs

As LLMs spread, Ethan, Sophia, and Noah ask how they fit at Minermont; Hazel leads them through tokenization, embeddings, and attention.

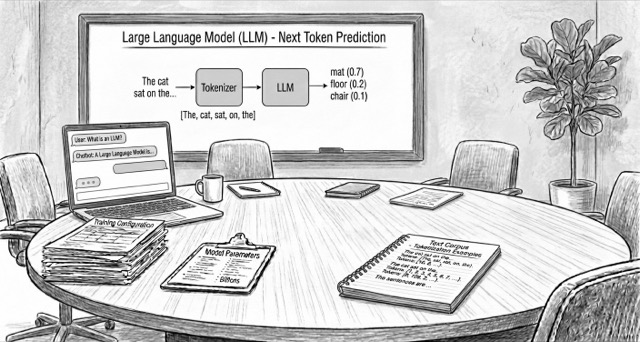

Large Language Models (LLMs) generate text by learning patterns over sequences. This chapter focuses on the foundations: tokenization, embeddings, and how text becomes learnable signals.

7.1 The Word Craftsman: The BPE Tokenizer: Discover the Byte-Pair Encoding (BPE) algorithm. In this interactive simulation, you won't just see how it works—you'll train your own tokenizer. You will understand why this process of "learning a vocabulary" is crucial for an AI model to efficiently handle medical jargon, abbreviations, and the richness of human language.

7.1 Embedding Projector: Visualizing Word Vectors: You will explore how words transform into mathematical vectors in high-dimensional spaces, where meaning emerges from geometry. Using the TensorFlow Embedding Projector, you'll visualize how language models organize medical knowledge, clustering related terms and capturing complex semantic relationships.

7.3 LLM Visualization: Seeing AI from the Inside: You will discover the inner workings of a large language model through an interactive 3D visualization. You can observe how information flows through layers, how the attention mechanism works, and how the model finally predicts the next word. This tool connects everything learned in the chapter in a unique visual experience.

7.4 Interactive Game: Training a Language Model: Experience how a language model progressively improves its predictions as it adjusts its internal parameters. In this educational game, you'll train a small neural network on a thematic corpus, visually observing how values change with each processed example.

7.5 LLM Landscape: The Most Relevant Models: A practical map of today’s main model families (frontier APIs, open-weight models, on-device options) and the trade-offs that matter in real deployments.

7.5 LLM Benchmarks: Current vs. Saturated: A curated guide to the most used LLM benchmarks, which ones still discriminate models, and where to verify leaderboard claims.

Algorithm Pseudocode

- 📝 Word2Vec Pseudocode: Skip-Gram and CBOW architectures, negative sampling, hierarchical softmax, and subsampling techniques.

Mathematical Foundations

- Tokenisation & Embedding Geometry: Byte-Pair Encoding math and embedding-space intuition that grounds the tokenizer simulator and projector demo.

- Universal Approximation Theorem: Why a single hidden layer can approximate any continuous function on a compact set (with key references).

Bibliography and Additional Resources

- 📚 Bibliography: LLMs and Tokenization: Verified resources and references on Large Language Models, transformers, tokenization and the BPE algorithm.

- 📚 Bibliography: Transformers and Attention Mechanisms: Foundational papers, educational resources, official blogs, interviews, and tools on Transformer architectures and their applications.