Chapter 8: From Tokens to Thought

From Tokens to Thought: Building Minds from Text

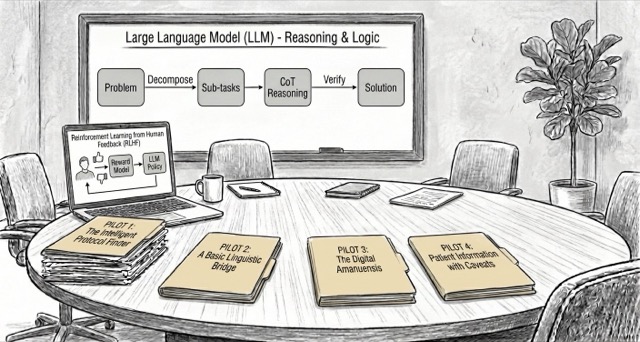

Building on the foundations, the team pilots LLMs for admin and patient communication with Hazel’s insistence on human validation, while confronting bias, privacy, and hallucinations.

Building on the foundations from Chapter 7, the team now focuses on what happens after a model can predict text: alignment with human preferences, reasoning at inference time, and the practical mindset needed to deploy LLMs responsibly.

What Will You Learn?

This chapter focuses on post-training and deployment mindset: learning from preferences (RLHF), reasoning at inference time, and basic safety/evaluation habits.

8.1 When Machines Learn from Our Preferences (RLHF): An interactive simulation of preference-based fine-tuning.

8.3 Beyond Prediction: Reasoning LLMs: What changes when models spend more compute at inference and make steps explicit.

Mathematical Foundations

- 8.1 📐 REINFORCE & RLHF: Policy-gradient intuition and the RLHF pipeline.

Bibliography and Additional Resources

- 📚 Bibliography: LLM Applications and Safety: Resources on prompting, post-training, evaluation, and responsible LLM deployment.